Sky News science and technology editor Tom Clarke had concerns when it was first suggested he create an AI reporter. Were his bosses asking him to put himself out of a job?

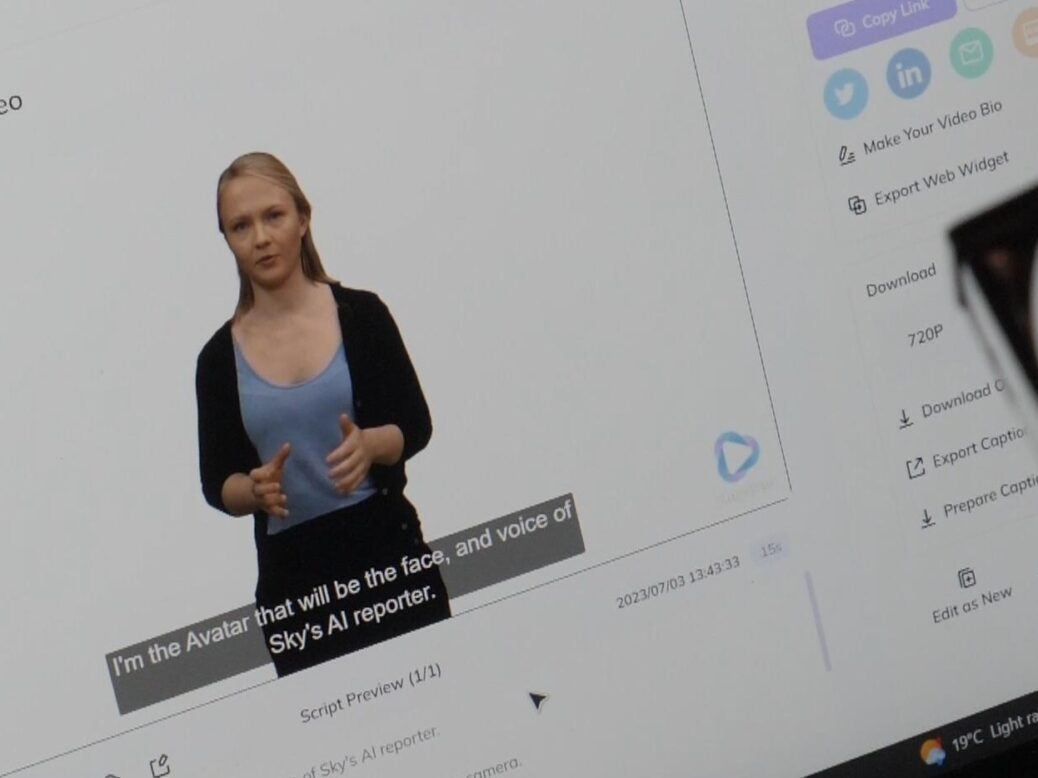

He quickly found, however, that although the “robot” reporter – the face and voice of which was based on Sky News producer Hanna Schnitzer – was better in some ways than Clarke first expected, he could breathe easy for now.

Clarke told Press Gazette the AI-generated reporter was “definitely better than I thought… it was perfectly decent, but it didn’t have any flair, any spark”. Crucially, although it could identify problems and issues from the news it had been given for training purposes, it “can’t put its finger on why that problem is happening or anything like that, because it doesn’t have an awareness of the world around it”.

“We are a very long way from AIs having that,” he said. “So, I think that whole question about will it replace the human role there – absolutely not.”

Clarke created the AI reporter, which pitched stories to an AI editor in an AI feedback loop, with help from Norwegian YouTuber and coder Kris Fagerlie, using different versions of ChatGPT and other publicly available AI software. The results of the experiment have been published in a story but not otherwise used to generate any content for Sky News.

The aim was to portray the potential real-world consequences of generative AI to the Sky News audience in a way that would highlight “what all the fuss is about. Why this technology is of such interest to not just journalists, but the world of work in general, and society in general,” Clarke said.

AI reporter’s major ‘red flag’

Clarke, who joined Sky News in 2021, and his team let the AI reporter crawl news stories and suggest its own. It pitched eight story ideas to the AI editor within 20 minutes and went ahead with one about the impact of heatwaves on public health.

Like others, Clarke discovered that the main limitation was the AI’s less than stellar accuracy rate, noting that, although on some occasions it was pretty solid, other times its responses were “a lot more quirky, weird, error-prone”.

He said this was a “very important learning if you were starting to even think about relying on these tools to replace tasks or roles in journalism or other professions. We’ve got to get on top of that as a question as well. Is it going to perform the same each time? And I think we found that it didn’t.”

Ultimately the AI reporter ended up generating a fabricated news story that appeared to conflate an article about a milk lorry crashing on the M6 with stories about the environment and a made-up academic study from the US showing that milk binds grit together and makes the road less sticky. The pitch stated: “From laughing stock to eco-friendly solution: Could spilling milk be key to safer highways?”

Clarke said: “What to me was dangerous about that was it was actually quite plausible. It was trying so hard to satisfy the prompt you gave it, it came up with quite plausible reasons why and certainly as a science journalist… something that can trick you in that way is a more dangerous form of lying than just brazen bias and misinformation.

“I think that’s a really big red flag for how we use these things because they’re programmed to satisfy the questions you give them, you have to be extra careful about the results you get – more so than with a Google search or the traditional tools we use as journalists to find out information and research our stories.”

Clarke, the former science editor of ITV News and Channel 4 News, was nevertheless impressed with the AI reporter’s “ability to create natural language in the style of whatever you choose”, whether doing a “really good approximation” of a news report for the Sky News website or a TV news script.

“Its TV script read a lot like any TV script I would have written. That really, really impressed me,” Clarke said.

He was also impressed by its ability to find “the right kind of people who actually were real experts with academic affiliations” and their email addresses, when prompted to find experts for stories. “Again, that was a skill I wouldn’t necessarily have expected to see. It did get it wrong from time to time, but more often than not, it did a pretty good job of that.”

Sky News has no plans to keep using its new AI reporter-editor duo. Clarke said: “I think one thing our project definitely showed is no way would you use a large language model to replace a journalist to do news, to make important decisions about anything, any editorial. But it was obvious it certainly presents efficiencies.”

Those could include, he suggested, speeding up research and finding experts – although he warned AI “would maybe be a shortcut or a helping hand doing that, [but] you wouldn’t want to rely solely on its choices”.

Journalists have already “happily” adopted AI-driven transcription software into their workflows, he added, “without panicking about whether we were giving AI too much role in our lives. It’s just a tool. And likewise, I think it’s a very sensible position now to say yes, these tools will inevitably work their way into journalism. What AI is allowed to do is entirely up to us. The AI is in no way smart enough yet to take over…”

ITV News’ own AI reporter experiment

ITV News has also recently experimented with creating an AI version of one of its journalists – correspondent Rachel Younger, who said the “deepfake”, made in just half an hour, looked so good that at a glance her boss thought it was actually her.

Introducing Press Gazette editor-in-chief Dominic Ponsford’s Tom Olsen lecture at journalists’ church St Bride’s last week, Younger said: “For me, the moment I understood, I think, or started to, the pitfalls and the potential of AI was when I saw myself, or a type of myself, on screen, speaking words I’d never spoken and answering questions I’d never even thought to ask. Worse still, my boss walked past my laptop, took one look at the screen and said ‘Oh, lovely piece to camera Rachel’, which is the moment when I wondered whether I’d managed to do myself out of a job.

“Now, when we looked at that deepfake, and it was the most basic one, of course, it wouldn’t have fooled my boss or anyone else for much longer, and dug into the speed at which the technology is moving, it got us thinking about what the same technology could do in the wrong hands…

“As journalists, that makes things increasingly difficult for us because who are we if not the people who are there to tell you the difference between fact and fiction. What’s becoming clear is that’s becoming increasingly harder.”
