Facebook has revealed for the first time how it decides what “problematic or low-quality” content should be demoted in its News Feed.

The social media giant has often been criticised for not being transparent enough with decisions about what posts it effectively hides from users.

It has now published its content distribution guidelines, which outline the types of posts that receive “reduced distribution”, split into 28 categories.

The guidelines reveal how it penalises news articles without bylines or otherwise transparent authorship. Facebook also surveys its users on whether they trust news publishers and demotes publishers found to be “broadly untrusted”.

The platform also said it prioritises original stories. “The more extensive original reporting that an article contains, the more distribution it will receive in News Feed. Original reporting includes things such as exclusive source materials, significant analysis, new interviews or the creation of original visuals,” it said.

However, Facebook still has not explained exactly how posts are demoted or to what extent demotion affects reach, nor has it given specific examples.

Jason Hirsch, Facebook’s head of integrity policy, told The Verge the way in which different types of posts are demoted, such as spam versus health misinformation, would not be made public “for adversarial reasons”. He said the guidelines had been published to “give a clearer sense of what we think is problematic but not worth removing”.

Posts that breach Facebook’s community standards are treated separately and removed entirely.

Facebook’s dos and don’ts for publishers

Below we have summarised the restrictions shared by Facebook that we deem to be the most important for publishers to take into account.

Don’t be an ‘ad farm’ site

Facebook says users are “disappointed” when they click on a link that takes them to a page that interrupts their reading with ads or autoplaying video/audio and frequently reloads, starting the ads again.

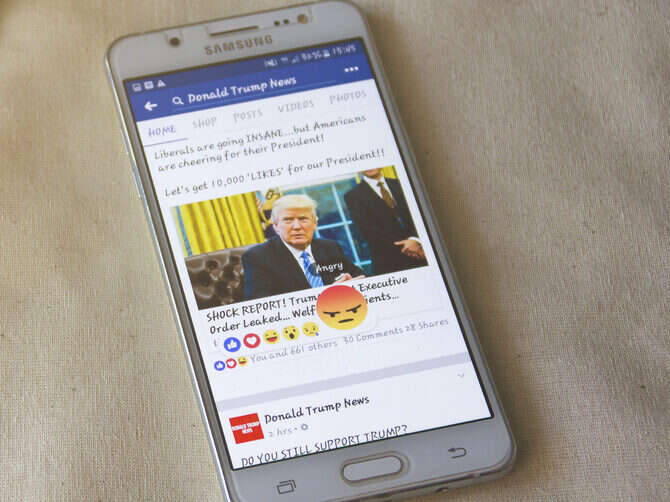

Don’t be clickbait

Don’t “lure” people into clicking a link by “creating misleading expectations” about its content with phrases like “You won’t believe…”, text in all capital letters or excessive exclamation marks.

Facebook says its users want to see headlines that accurately reflect content so they can make “informed decisions” about how they spend their time.

Don’t be ‘engagement bait’

These posts explicitly ask readers to vote, share, comment, tag or like a post.

This is only allowed when there is a specific call to action such as helping to find a missing person, sharing a petition or donating to charity.

Don’t request ‘unnecessary’ user data

Websites that ask for personal information before allowing readers to see content are marked down. This applies to requests for a user’s name, social media login, phone number, email address or postal address but does not apply when a site is behind a paywall.

Do have a high-quality browsing experience

Facebook penalises links to websites that are not user-friendly, for example those with poorly formatted mobile pages or text that is difficult to read. This “disappoints” users, it said.

Don’t disguise the website domain

Links that disguise their destination by concealing the name of the landing page or web address are demoted as they can often lead to porn or other “problematic” content. This restriction does not include the use of URL shorteners.

Don’t try and dupe the Facebook Live video function

Facebook can tell when pages use the Live Video option to post pre-recorded video, static or animated images.

Facebook said: “These videos abuse the live video format policies, making them misleading to users or creating a degraded user experience. In addition, we want to ensure that ‘live broadcasts’ and ‘videos’ on Facebook are in fact live in nature or videos. If we confirm that a video violates our Facebook Live policies, it is removed.”

Don’t share sensationalist health claims

Facebook penalises health claims that it deems sensationalist, misleading, spammy or exaggerated.

Do original reporting

Facebook penalises websites that don’t do their own original reporting or analysis, especially if they largely publish content that is lifted wholesale or only slightly modified. It says the more original reporting an article contains, the more distribution it will receive in the News Feed.

It is looking for exclusive source materials, significant analysis, new interviews or the creation of original visuals.

“We value providing people with informative news. We understand that original reporting plays an important role around the world and takes time and expertise,” Facebook said.

Facebook does claim to recognise articles taken wholesale from wire services such as PA and not to penalise them.

Don’t spam stories into Facebook groups

Social media editors should not post too much in Facebook groups, where stories can reach potentially “irrelevant” audiences: if the platform detects this, the page will be penalised.

Be trustworthy

Facebook surveys its users and demotes publishers that are deemed “broadly untrusted”. Conversely it boosts trusted publishers as rated in the same surveys.

Do include bylines or author information

Facebook wants articles to have bylines or, at the very least, for editorial staff information to be available on a publisher level.

It said those that don’t provide authorship information “often lack credibility to readers and produce content that people tell us they don’t want to see on Facebook”.

“Editorial transparency is also a professional standard supported by global media and journalism experts.”

Facebook said this measure is currently in place only in some countries, because journalists in places with poor press freedom can be at risk if they are identified.

Do cultivate other traffic sources

If a disproportionate amount of a website’s traffic is coming from Facebook, the platform realises this and sees it as a “sign that the domain is succeeding on News Feed in a way that doesn’t reflect the authority that domain has built on the internet more broadly, usually by boosting low-quality and less authoritative content, which people have told us they do not like”.

Don’t share debunked misinformation

Facebook will mark down content containing anything that has been marked as “false, altered or partly false” by its partner fact-checkers, such as Full Fact, Logically, Fact Check NI and Reuters in the UK. This content, where it is seen, will be labelled with a warning.

Picture: Jakraphong Photography/Shutterstock
